Artificial intelligence opens up fascinating new possibilities, unfortunately also for cybercriminals. They increasingly rely on so-called deepfakes: AI-generated fake videos, images or voices that are used in the workplace to stage deceptively real attacks.

Thanks to artificial intelligence, convincing fake videos, images and voices can now be created in just a few minutes. For cybercriminals, deepfakes have long been a tool for harming companies, whether through fraud, extortion or targeted manipulation. A recent analysis by Surfshark shows how serious the situation is: in the first quarter of 2025, there were already 19% more deepfake incidents than in the whole of 2024.

Our case study makes it clear how such attacks can work – and what you can do to be prepared.

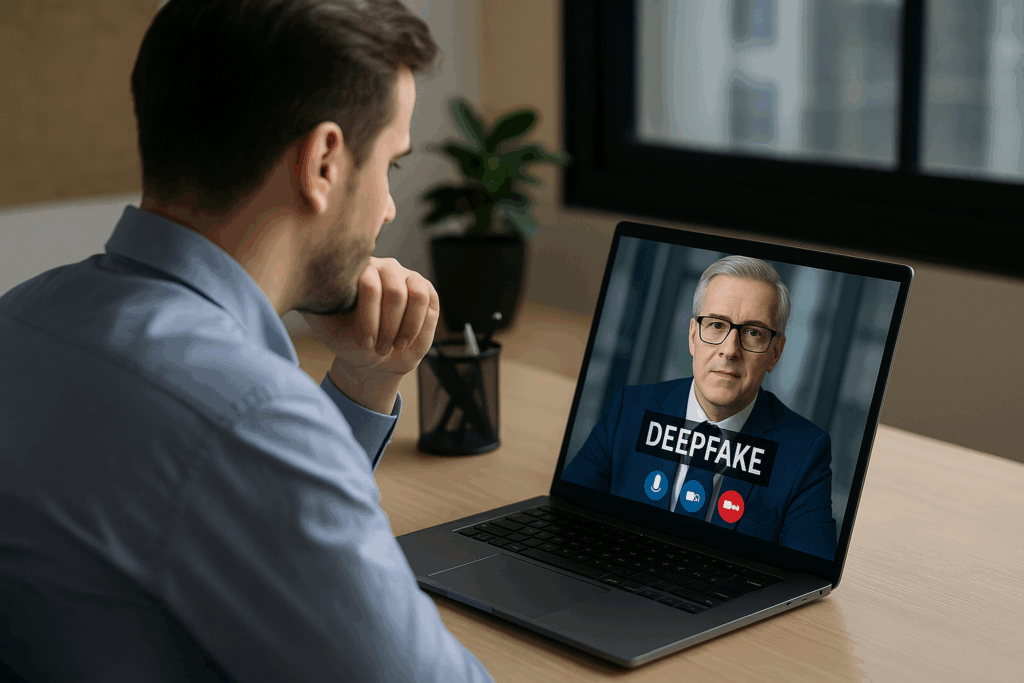

An accounting employee receives a video call from his managing director, a situation that occurs very often in everyday work. The voice and face seem completely familiar.

The “boss” explains emphatically that a Japanese business partner urgently expects a six-figure payment, which must be transferred today. An important deadline, he says, has already been missed.

Applying time pressure and leaning on the authority of the supposed superior, the caller asks the employee to carry out the transfer immediately and sends the account details at the same time.

What the employee doesn’t know is that the call is a deceptively real deepfake, which criminals are using in a targeted attempt to divert company funds to a fraudulent foreign account.

How are deepfakes created and what technologies are behind them?

How do cybercriminals use deepfakes to deceive companies and employees?

What tips do our experts give so that companies can reliably detect deepfakes?

What measures are necessary to effectively protect against such attacks?